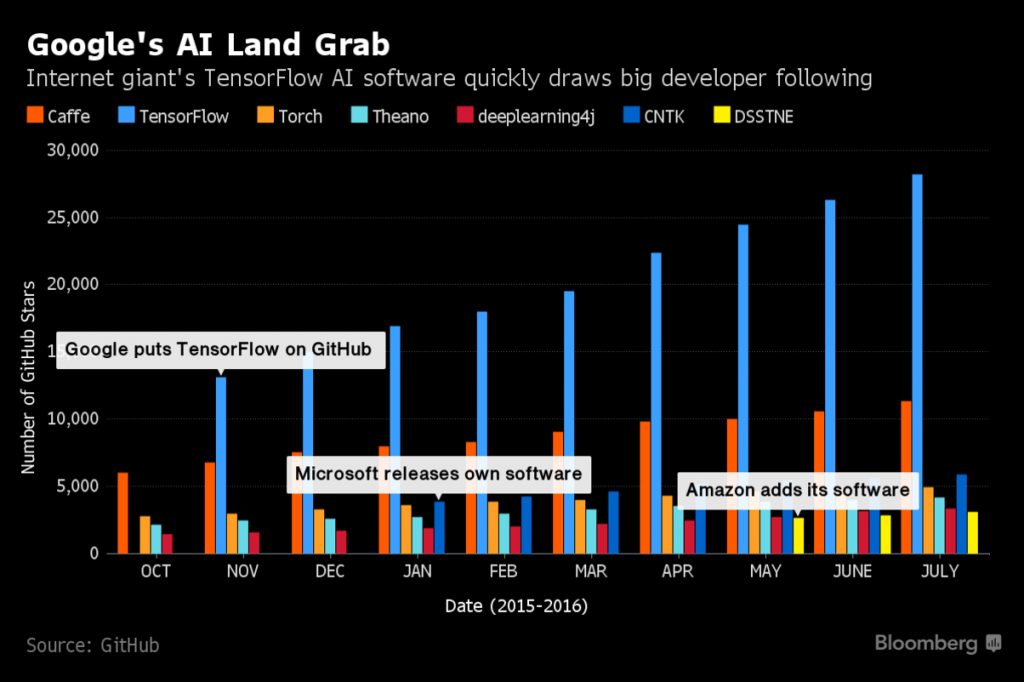

Google hosted their first TensorFlow Dev Summit several days ago and announced the release of TensorFlow version 1.0. In fact, TensorFlow has become so popular that it is now the number one deep learning library on GitHub, and it has remained so ever since its initial open source release in November 2015.

So what is all the craze about?

TensorFlow is an open source framework for deep learning. Deep learning is behind many of the recent advances in speech recognition systems. Furthermore, Google has used the technology in Photos (image recognition), Gmail (for example, anti-spam filtering), search and adverts, to name just a few. They have brought all this research and experience from DistBelief, its predecessor, into the open source TensorFlow library, which is free for app developers across the world to use.

Google have now added many new tools to the framework, including the ability to train neural networks on a whole host of devices, from servers and mobiles to distributed systems. According to Rajat Monga, they have also added traditional machine learning tools such as K-means and support vector machines. They have integrated Keras, a Python-based library that was introduced to make the Theano deep learning framework easier to use and to simplify more advanced tasks such as setting hyperparameters. They are also now offering the Cloud Machine Learning service, which makes it possible to run TensorFlow on Google’s cloud infrastructure.

Historically, one of the problems with neural networks was processing speed. However, Google reports that version three of its Inception neural network model now trains faster than ever: up to 58 times faster when the work is distributed across 64 GPUs.

As mentioned above, TensorFlow is able to run on a whole host of processors. Google have been working closely with Qualcomm on the Hexagon digital signal processor (DSP), which ships in Qualcomm’s Snapdragon 820 mobile chip and its DragonBoard 820c board, and which is better suited to these workloads than regular processors that weren’t specifically designed for machine learning.

A comprehensive list of the updates can be found in the release notes: https://github.com/tensorflow/tensorflow/releases/tag/v1.0.0

So what exactly is deep learning?

Deep learning is a branch of machine learning which is loosely inspired by how our brain works. Before TensorFlow, Google worked on the Google Brain project using TensorFlow’s predecessor, a machine learning library they developed called DistBelief. DistBelief started simply as a research project, but after much success the team collaborated with 50 different teams at Google and deployed its systems into real commercial products. DistBelief’s deep learning neural networks have since been included in many of Google’s major products, including Google Search, Google Voice Search, advertising, Google Photos, Google Maps, Google Street View, Google Translate and YouTube.

Google assigned some of the most prominent computer scientists in the field of machine learning to the project, such as Geoffrey Hinton (known for his work on the Restricted Boltzmann Machine and for helping to popularise convolutional networks through ImageNet) and Jeff Dean. In 2009, the team led by Hinton was able to substantially reduce the number of errors made by its neural networks. This was made possible by Hinton’s work on generalised backpropagation, which directly led to reducing the number of errors in Google’s speech recognition software by at least 25 percent. The team has since simplified and refactored the DistBelief codebase into a much faster and more robust application-grade library, which became TensorFlow.

Since TensorFlow’s inception in 2015, the broader community has added many features, including distributed training and support for the Hadoop Distributed File System (HDFS).

Greg Corrado, Senior Research Scientist at Google, says: “it no longer makes sense to have separate tools for research in machine learning and people who are developing real products. TensorFlow provides one set of tools to try out your ideas and if they work they can be moved directly to products without having to rewrite code. TensorFlow allows collaboration and communication between researchers. It allows a researcher in one location to develop and explore it and send their code on the web (GitHub being the most popular open source repository) so that someone else can use it on the other side of the world.”

The project team’s vision was to build a system which simplified the deployment of large scale machine learning models to a variety of hardware setups, ranging from smartphones and single servers to distributed systems made up of hundreds of machines with thousands of GPUs. TensorFlow can be used to build the main neural network models, including Deep Belief Nets, Convolutional Nets, Deep Autoencoders, Restricted Boltzmann Machines, Recurrent Nets and Recursive Neural Tensor Nets, to name a few of the most popular.

The API is available in C++ and Python, and it has since been interfaced with other languages including Lua, Go, JavaScript, Ruby (through a SWIG interface) and Haskell, to name a few. TensorFlow is based on the concept of a computational graph, where nodes represent either persistent data or math operations. Distributed training is achieved through data parallelism: each worker processes a different slice of the data and the results are combined, in a manner loosely comparable to the iterative MapReduce pattern familiar from the Hadoop framework.
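To make the computational graph idea more concrete, here is a minimal sketch in plain Python. It is not the TensorFlow API: the `Variable` and `Op` classes and the `evaluate` function are hypothetical names invented for this illustration. It only shows the general pattern of building a graph of data nodes and operation nodes first, and computing a result afterwards.

```python
import operator


class Variable:
    """A graph node holding persistent data (hypothetical, not TensorFlow)."""

    def __init__(self, name, value):
        self.name = name
        self.value = value


class Op:
    """A graph node representing a math operation on its input nodes."""

    def __init__(self, name, fn, *inputs):
        self.name = name
        self.fn = fn
        self.inputs = inputs


def evaluate(node, cache=None):
    """Recursively evaluate a node, computing each node at most once."""
    if cache is None:
        cache = {}
    if node.name in cache:
        return cache[node.name]
    if isinstance(node, Variable):
        result = node.value
    else:
        args = [evaluate(inp, cache) for inp in node.inputs]
        result = node.fn(*args)
    cache[node.name] = result
    return result


# Build the graph for y = w * x + b. Nothing is computed at this point.
w = Variable("w", 2.0)
x = Variable("x", 3.0)
b = Variable("b", 1.0)
wx = Op("mul", operator.mul, w, x)
y = Op("add", operator.add, wx, b)

# Only now is the graph walked and the result computed.
result = evaluate(y)
print(result)
```

Separating the description of the computation from its execution is what allows a framework to decide later where each operation should run, for instance on a different device or machine.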

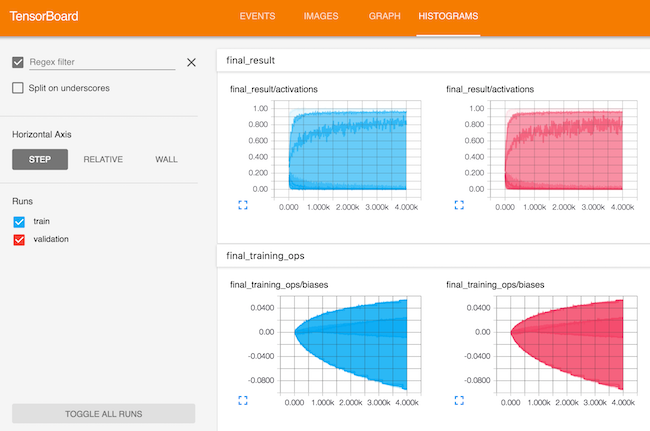

TensorBoard is a nice feature: a visualisation tool for network architecture and performance. It allows you to zoom in and visualise different levels of a network, as well as view summary metrics and how they change over time throughout the training process.

Machine learning is an exciting, expanding field which has never been so popular or so applicable. There are many more features and topics to discuss that are beyond the scope of this article.

For full videos of the entire TensorFlow Dev Summit 2017, including the latest additions to TensorFlow v1.0, see the link below.